Saviynt Intelligence Updates; P0 Security $15m Funding; Zero Trust and Access Tokens; Sensitive Data + IAM at Google; Low Code Policy Creation

Saviynt Joins The Identity Security Party

Saviynt, the identity cloud provider, has announced a major update to both its product and narrative, with the release of its Intelligence Suite. The upgrade delivers three major areas of capability that aim to reduce the effort and inaccuracy associated with access review.

The new capabilities will include Access Recommendations, an AI Co-Pilot and a user Trust Scoring process. The use of co-piloting seems an obvious evolution for the ever-increasing workload associated with access governance - for both people and workloads.

A lack of context, role and permission descriptions, and activity and usage data has contributed over the years to poor execution of access reviews, ultimately leading to bad approval decisions and no net change to the associated permissions and risk.

“The Identity landscape is changing rapidly. With the explosion of identity types and events in an organization, both in terms of volume and velocity, we are delivering an intelligent platform which can meet the identity security needs of our customers and partners for the next decade,” said Vibhuti Sinha, Chief Product Officer, Workforce Identity and Intelligence at Saviynt. “Identity platforms need to recognize the business needs of an organization and user. They need to do the heavy lifting of figuring out the necessary integrations, assigning just enough access, applying mitigating controls or security checks with zero to minimal inputs. That is our northstar and that's what we are delivering.”

The IGA problem statement is getting larger and we need to think smarter about how to handle that.

P0 Security Raises $15 million To Deliver Cloud Access

P0 Security, the startup looking to deliver cloud access governance, has recently announced a $15 million funding round. They focus on the combination of IGA (identity governance and administration) and PAM (privileged access management) from a purely cloud point of view.

The press release continues with further validation that the IGA problem statement is getting bigger:

“Legacy approaches to access governance and identity security relied on network boundaries. In today’s cloud-native environment, the explosion of cloud resources, data locations and identities—both human and non-human—are exponentially expanding access paths to sensitive data and critical infrastructure, rendering traditional methods ineffective.”

This is essentially the same position being taken by most IGA vendors (see Saviynt above): the number and types of identities requiring governance are increasing.

An ongoing community survey The Cyber Hut is currently running suggests that both IGA and NHI (non-human identity) management are key areas for disruption in 2025.

Zscaler is among the participants in this initial funding round.

Zero Trust and Access Token Architectures

B2E and B2C platform provider SecureAuth have released an article discussing how to improve the security of access token architectures. I won't lead by explaining what zero trust is. You can Google that one. I do want to comment on access tokens though. The use of OAuth2, OIDC and JWT within API landscapes is common. What SecureAuth are amplifying is how to overlay some of the key ZTNA (zero trust network access) concepts onto this specific landscape.

So why is that important?

“In a token-based architecture, the token itself becomes the primary attack surface. It’s common for a token to be used across multiple applications of varying sensitivity. Once inside the system, a single token might grant access to numerous applications. This widespread use increases the attack surface, but you can mitigate this risk by implementing Zero Trust mechanisms. Let’s explore how to apply these principles effectively.”

The interesting aspect, of course, is that whilst the human-centric identity world has developed key practices for things like proofing, verification, strong (often multi-factor) authentication and authorization design patterns, transferring them across to the non-human and workload world can be trickier.

One obvious thing to consider is that the access token essentially becomes a powerful object that adversaries want to steal, interfere with, or subvert by altering how it is verified. How are the tokens issued? Where are they stored? How are they verified? What properties and claims are bound to them? SecureAuth amplifies some decent best practices focused on segmentation, blast radius reduction and monitoring.

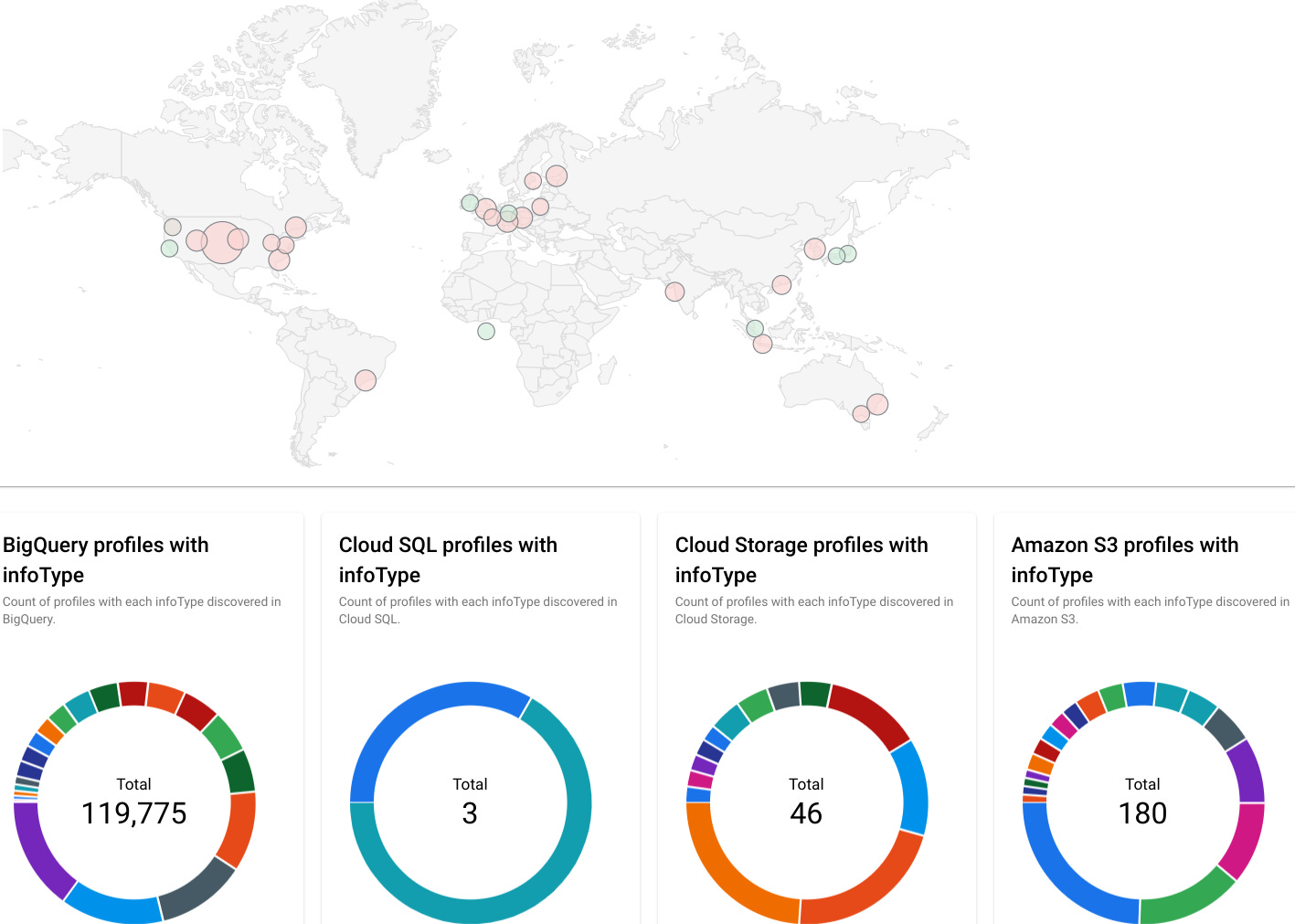

Google + Sensitive Data = Automated IAM

Google as a CSP (cloud service provider) has a pretty hefty kit bag of data, identity and security tooling and they have recently released a blog that knits together two components in this area - identification of sensitive data and then what to do about that from an identity and access management point of view.

You can’t protect what you can’t see of course, so knowing where “sensitive” things are is likely a good starting point. First up, they have a function aptly named Sensitive Data Protection that does just that: it scans data and assigns tags.

Tags are hugely useful when it comes to access control policy, as object tagging can be used in combination with analysis of the action (what you can do against an object) and analysis of the subject (the thing trying to access said object). Essentially, tags provide context, which in turn can help deliver adaptive access: the access evaluation, and in turn the response, is flexible based on this context.

“Sensitive Data Protection can look for evidence of sensitive data such as personally identifiable information, secrets, medical information, financial documents, and more. Discovery uses this technology to continuously monitor your data footprint looking for new assets and critical changes in existing assets that might increase or decrease risk to your business.”

Image Source: Google Sensitive Data Protection

This tagging mechanism essentially feeds into the IAM Conditions capability which can then leverage the tags as part of policy design and evaluation.
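The idea of tags driving policy evaluation can be sketched in a few lines. The model below is a generic illustration, not Google's IAM Conditions syntax or API: `Resource`, `ConditionalGrant`, `is_allowed` and the `sensitivity` tag key are all hypothetical names. A grant applies only while the resource's tags satisfy its condition, so when a discovery scan retags an object, the access decision changes without anyone editing the policy.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    """An object whose tags are assumed to be written by a discovery scan."""
    name: str
    tags: dict = field(default_factory=dict)


@dataclass(frozen=True)
class ConditionalGrant:
    """Hypothetical grant: member may perform action if all tags match."""
    member: str
    action: str
    required_tags: tuple  # pairs of (tag key, required value)


def is_allowed(member, action, resource, grants):
    """Deny by default; allow when a matching grant's tag condition holds."""
    for grant in grants:
        if grant.member != member or grant.action != action:
            continue
        if all(resource.tags.get(key) == value
               for key, value in grant.required_tags):
            return True
    return False


if __name__ == "__main__":
    grants = [
        ConditionalGrant("group:analysts", "read", (("sensitivity", "low"),)),
        ConditionalGrant("group:privacy-team", "read", (("sensitivity", "high"),)),
    ]
    table = Resource("orders_export", tags={"sensitivity": "low"})
    print(is_allowed("group:analysts", "read", table, grants))

    # A later scan finds personal data and retags the table.
    table.tags["sensitivity"] = "high"
    print(is_allowed("group:analysts", "read", table, grants))
    print(is_allowed("group:privacy-team", "read", table, grants))
```

One design choice worth noting in this sketch is that an untagged resource matches no grant, so anything the scanner has not yet classified fails closed.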

Of course the major benefit is the ability to automate - both the discovery and policy creation - which Google claims to support.

Certificate Management → Credential Management

Following on the thread of zero trust and non-human and workload environments, workload identity provider SPIRL have a succinct blog talking about some practical aspects associated with credential management.

They make the evolutionary claim that we essentially need to move away from the traditional way of thinking about certificate management (discovery, issuance, revocation and all the PKI headaches that used to bring) to thinking more about certs as credentials - and the differences that entails.

They focus on SPIFFE as the vehicle that can help with the naming of workloads, as well as the mechanism to assist in the background issuance and, more importantly, rotation of the possession factors being used to authenticate these non-humans.
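As a rough illustration of the naming and rotation ideas, the sketch below parses a SPIFFE ID (a URI of the form `spiffe://trust-domain/path`) and decides when a short-lived credential is due for background rotation. It is not drawn from the SPIRL blog: `WorkloadCredential`, `needs_rotation` and the rotate-at-half-lifetime threshold are assumptions for illustration, and the parser checks only the basic URI shape rather than the full SPIFFE specification.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from urllib.parse import urlsplit


@dataclass(frozen=True)
class WorkloadCredential:
    """Hypothetical short-lived credential bound to a workload name."""
    spiffe_id: str
    issued_at: datetime
    expires_at: datetime


def parse_spiffe_id(spiffe_id: str):
    """Split a SPIFFE ID into (trust_domain, path); basic shape check only."""
    parts = urlsplit(spiffe_id)
    if parts.scheme != "spiffe" or not parts.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    if parts.query or parts.fragment:
        raise ValueError("SPIFFE IDs carry no query or fragment")
    return parts.netloc, parts.path


def needs_rotation(credential, now, fraction=0.5):
    """True once the given fraction of the credential lifetime has elapsed.

    The 0.5 default is an assumed policy: rotating well before expiry leaves
    room for retries so the workload never presents an expired credential.
    """
    lifetime = credential.expires_at - credential.issued_at
    return now >= credential.issued_at + lifetime * fraction


if __name__ == "__main__":
    issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
    cred = WorkloadCredential(
        spiffe_id="spiffe://example.org/ns/payments/sa/api",
        issued_at=issued,
        expires_at=issued + timedelta(hours=1),
    )
    print(parse_spiffe_id(cred.spiffe_id))
    print(needs_rotation(cred, issued + timedelta(minutes=20)))
    print(needs_rotation(cred, issued + timedelta(minutes=30)))
```

The point of the sketch is the shift in mindset the paragraph describes: the credential is identified by a stable workload name rather than by a certificate serial number, and it is replaced on a schedule rather than discovered and revoked after the fact.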

Low-Code Policy Creation

Authorization has been having a real renaissance these past three years, with numerous new approaches, startups and domain specific languages to help from both a policy management and enforcement point of view (including a recent webinar The Cyber Hut conducted with PlainID).

To that end, Styra, the organisation behind Open Policy Agent (OPA), has updated its offering with a low-code effort to help in the design of application-level authorization logic.

“Enter the Enterprise OPA Platform’s low-code policy builder. Now available for early access, it is a powerful tool that empowers product owners and security analysts to design, review, and experiment on application permission logic directly, while collaborating with application developers as needed. This approach offers a more agile and efficient way to manage permission logic, addressing the key challenges that enterprises face.”